How do you retrace the steps that led to the model’s creation if, say, your data scientists are away for some reason?

How will you reproduce predictions to validate the model’s outcome if, say, someone questions it?

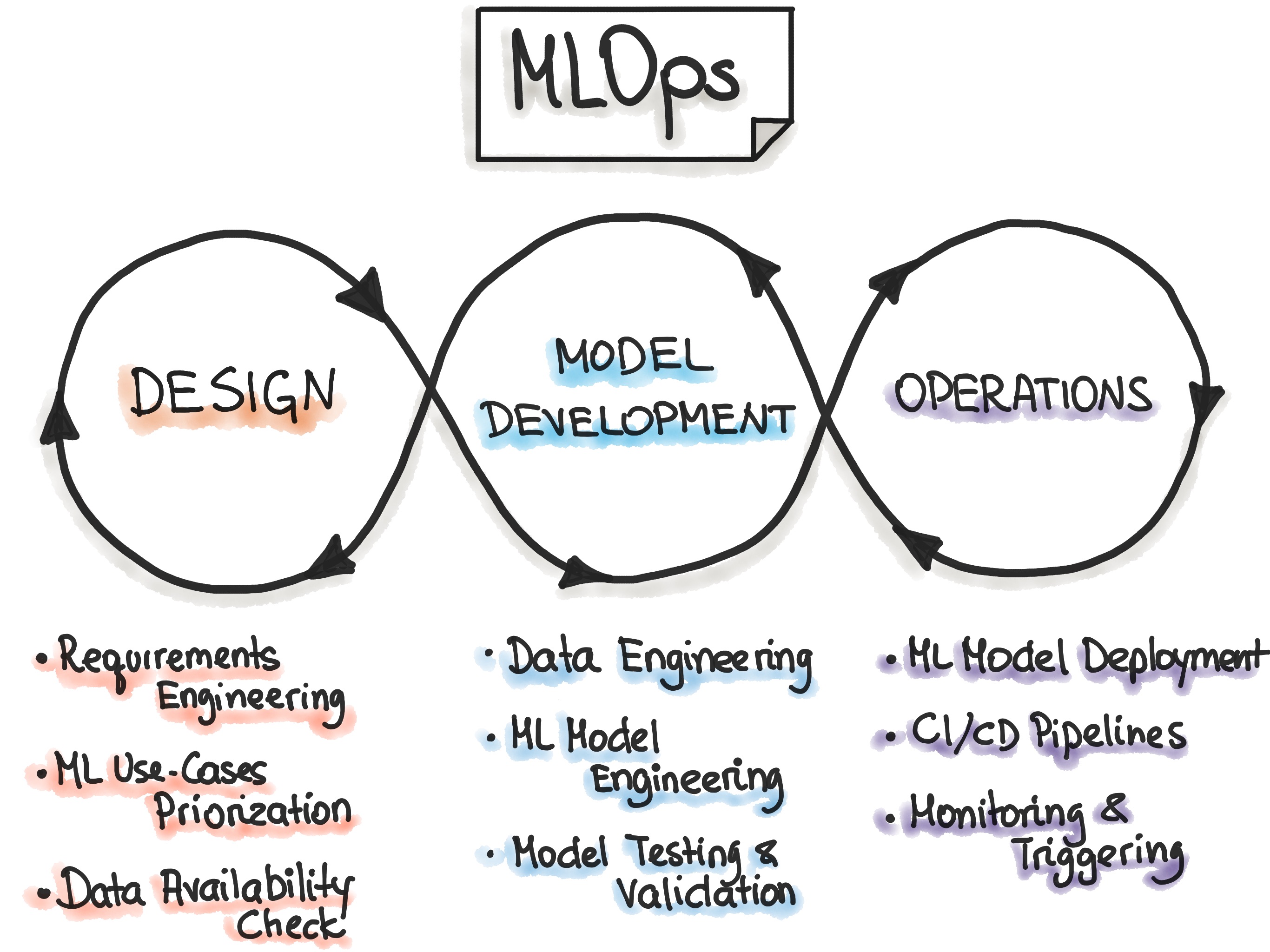

It is not just about resourcing data scientists, software developers, or data engineers to work in isolation to achieve the operationalization and automation of the ML lifecycle. It is about how the three can work in tandem as a unit. For this, data quality and availability must remain consistent across processes and environments so that the model performs on par with the set metrics. Again, the core problem boils down to operationalization and automation, which we have diligently tried, and still try, to address via MLOps.

To get to the crux of the problem, you first need to answer a few questions:

- How do the three personas, i.e., data engineers, data scientists, and ML engineers, use different tools and techniques?

- How do you collaborate on the ML workflow within and between teams?

Because you cannot share models the way you share other software packages, you need to share the ML pipeline that can reproduce and tune the model on new data specific to the new environment or scenario. A common norm in large enterprises is to have independent data science teams, most of which work, day in and day out, on similar workflows.

- Now, how do you collaborate and share the results?

- When it comes to enterprise readiness, how do you plan governance for both data and ML models?

- When you deal with specialized hardware, cost management comes into play, because you need large amounts of GPU and memory for jobs that take a long time to run. Some of these jobs can take days or even weeks to produce a good model. So how do you establish trust?

Having insights into these questions, even modest ones, will help you identify the real-time use cases and factor them into an enterprise AI plan.
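To make the idea of sharing a pipeline rather than a model a little more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how ML Works or any particular toolkit does it: the names `RunRecord`, `fingerprint`, `train`, `run_pipeline`, and `predict` are hypothetical, and the "model" is a deliberately tiny one-variable regression with a hypothetical `l2` parameter. The point is only that a deterministic pipeline, plus a record of the data fingerprint and parameters, lets someone else retrace a run and reproduce its predictions.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    """Hypothetical lineage record: what went in and what came out of a run."""
    data_sha256: str   # fingerprint of the training data
    params: dict       # parameters used for this run
    weights: tuple     # (slope, intercept) of the fitted model


def fingerprint(rows):
    """Stable hash of the training rows so a run can be traced to its inputs."""
    payload = json.dumps(rows, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def train(rows, params):
    """Fit y = slope * x + intercept; `l2` is an assumed ridge-style penalty."""
    n = len(rows)
    mean_x = sum(x for x, _ in rows) / n
    mean_y = sum(y for _, y in rows) / n
    var_x = sum((x - mean_x) ** 2 for x, _ in rows)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in rows)
    slope = cov_xy / (var_x + params.get("l2", 0.0))
    intercept = mean_y - slope * mean_x
    return slope, intercept


def run_pipeline(rows, params):
    """The shareable unit: same data and params always yield the same record."""
    return RunRecord(fingerprint(rows), dict(params), train(rows, params))


def predict(record, x):
    """Reproduce a prediction from a stored record alone."""
    slope, intercept = record.weights
    return slope * x + intercept


if __name__ == "__main__":
    rows = [[1.0, 2.0], [2.0, 4.0], [3.0, 6.0]]
    record = run_pipeline(rows, {"l2": 0.0})
    # Anyone re-running the pipeline on the same inputs can check the result.
    print(record == run_pipeline(rows, {"l2": 0.0}))
    print(predict(record, 4.0))
```

In a real setting the record would also capture things this sketch leaves out, such as the code version and the environment, and would live in a shared store rather than in memory.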

What Next? Martian Version for Earthling Solution?

ML Works Will Just Do!

Most MLOps toolkits focus on the technical aspects of MLOps while ignoring its real-life impact. Another factor that weighs in is having a 360-degree view of, and control over, the micro and macro aspects of the data science process.

At Anteelo, we have tried reordering the ML alphabet with our proprietary suite of toolkits, in which we take immense pride. We call it ML Works. The solution, which is cloud-agnostic and scalable, automates model building, deployment, and monitoring, thereby reducing the need for larger teams.