Why AI?

You may have heard the terms analytics, advanced analytics, machine learning and AI. Let’s clarify:

- Analytics is the ability to record and playback information. You can record the travels of each vehicle and report the mileage of the fleet.

- Analytics becomes advanced analytics when you write algorithms to search for hidden patterns. You can cluster vehicles by similar mileage patterns.

- Machine learning is when the algorithm gets better with experience. The algorithm learns, from examples, to predict the mileage of each vehicle.

- AI is when a machine performs a task that human beings find interesting, useful and difficult to do. Your system is artificially intelligent if, for example, machine-learning algorithms predict vehicle mileage and adjust routes to accomplish the same goals but reduce the total mileage of the fleet.
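To make the four terms concrete, here is a minimal Python sketch that walks one toy data set through each level. Everything in it is an assumption made for illustration: the vehicle names, the mileage figures, and the helper functions `fleet_mileage`, `cluster_by_mileage`, `predict_next` and `pick_for_rerouting` are all hypothetical, and the techniques (a largest-gap split, a straight-line fit, a one-line decision rule) are deliberately simple stand-ins for what a production system would use.

```python
from statistics import mean

# Hypothetical daily mileage logs per vehicle (illustrative data only).
trips = {
    "van-1": [100, 110, 120, 130],
    "van-2": [105, 115, 125, 135],
    "truck-1": [400, 420, 440, 460],
}


def fleet_mileage(trips):
    """Analytics: record and play back. Report the total fleet mileage."""
    return sum(sum(days) for days in trips.values())


def cluster_by_mileage(trips):
    """Advanced analytics: look for a pattern in the data.

    Sorts vehicles by average daily mileage and splits them into two
    groups at the largest gap (a crude stand-in for real clustering).
    """
    averages = sorted((mean(days), vehicle) for vehicle, days in trips.items())
    gaps = [averages[i + 1][0] - averages[i][0] for i in range(len(averages) - 1)]
    split = gaps.index(max(gaps)) + 1
    low = [vehicle for _, vehicle in averages[:split]]
    high = [vehicle for _, vehicle in averages[split:]]
    return low, high


def predict_next(days):
    """Machine learning: learn from examples to predict the next day's mileage.

    Fits a least-squares line through (day index, mileage) and extrapolates
    one day ahead. More history gives the fit more examples to learn from.
    """
    xs = range(len(days))
    x_bar, y_bar = mean(xs), mean(days)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, days)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    return slope * len(days) + intercept


def pick_for_rerouting(trips):
    """AI (toy version): act on the predictions.

    Flags the vehicle with the highest predicted mileage as the first
    candidate for a route adjustment.
    """
    return max(trips, key=lambda vehicle: predict_next(trips[vehicle]))


print(fleet_mileage(trips))
print(cluster_by_mileage(trips))
print(predict_next(trips["van-1"]))
print(pick_for_rerouting(trips))
```

The last function is where the sketch crosses from prediction into action: the system does not just forecast mileage, it uses the forecast to decide which route to revisit.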


AI is often built from machine-learning algorithms, which owe their effectiveness to training data. The more high-quality data available for training, the smarter the machine will be. The amount of data available for training intelligent machines has exploded. By 2020 every human being on the planet will create about 1.7 megabytes of new information every second. According to IDC, information in enterprise data centers will grow 14-fold between 2012 and 2020.

And we are far from putting all this data to good use. Research by the McKinsey Global Institute suggests that, as of 2016, organizations with location-based data typically capture only 50 to 60 percent of its value. Here’s what it looks like when you use AI to put travel and transportation data to better use.


Take care of the fleet

Get as much use out of the fleet as possible. Across long-haul trucking, air, sea and rail-based shipping, and localized delivery services, AI can help companies squeeze inefficiencies out of the entire supply chain. AI can learn to monitor and predict fleet and infrastructure failures and to detect fraudulent use of fleet assets. With predictive maintenance, we anticipate failure and spend time only on assets that need service. With fraud detection, we ensure that vehicles are used only for intended purposes.

AI combined with fleet telematics can decrease fleet maintenance costs by up to 20 percent. The right AI solution could also decrease fuel costs (due to better fraud detection) by 5 to 10 percent. You spend less on maintenance and fraud, and extend the life and productivity of the fleet.

Take care of disruption

There will be bad days. The key is to recover quickly. AI provides the insights you need to predict and manage service disruption. AI can monitor streams of enterprise data and learn to forecast passenger demand, operations performance and route performance. The McKinsey Global Institute found that using AI to predict service disruption has the potential to increase fleet productivity (by reducing congestion) by up to 20 percent. If you can predict problems, you can handle them early and minimize disruption.

Take care of business

Good operations planning makes for effective fleets. AI can augment operations decisions by narrowing choices to only those options that will optimize pricing, load planning, schedule planning, crew planning and route planning. AI combined with fleet telematics has the potential to decrease overtime expenses by 30 percent and decrease total fleet mileage by 10 percent. You cut fleet costs by eliminating wasteful practices from consideration.

Take care of the passenger

The passenger experience includes cargo — cargo may not have a passenger experience directly but the people shipping the cargo do. Disruptions happen, but the best passenger experiences come from companies that respond quickly. AI can learn to automate both logistics and disruption recovery. It can provide real-time supply and demand matching, pricing and routing. According to the McKinsey Global Institute, AI’s improvement of the supply chain can increase operating margins by 5 to 35 percent. AI’s dynamic pricing can potentially increase profit margins by 17 percent. Whether it’s rebooking tickets or making sure products reach customers, AI can help you deliver a richer, more satisfying travel experience.

Applied AI is a differentiator

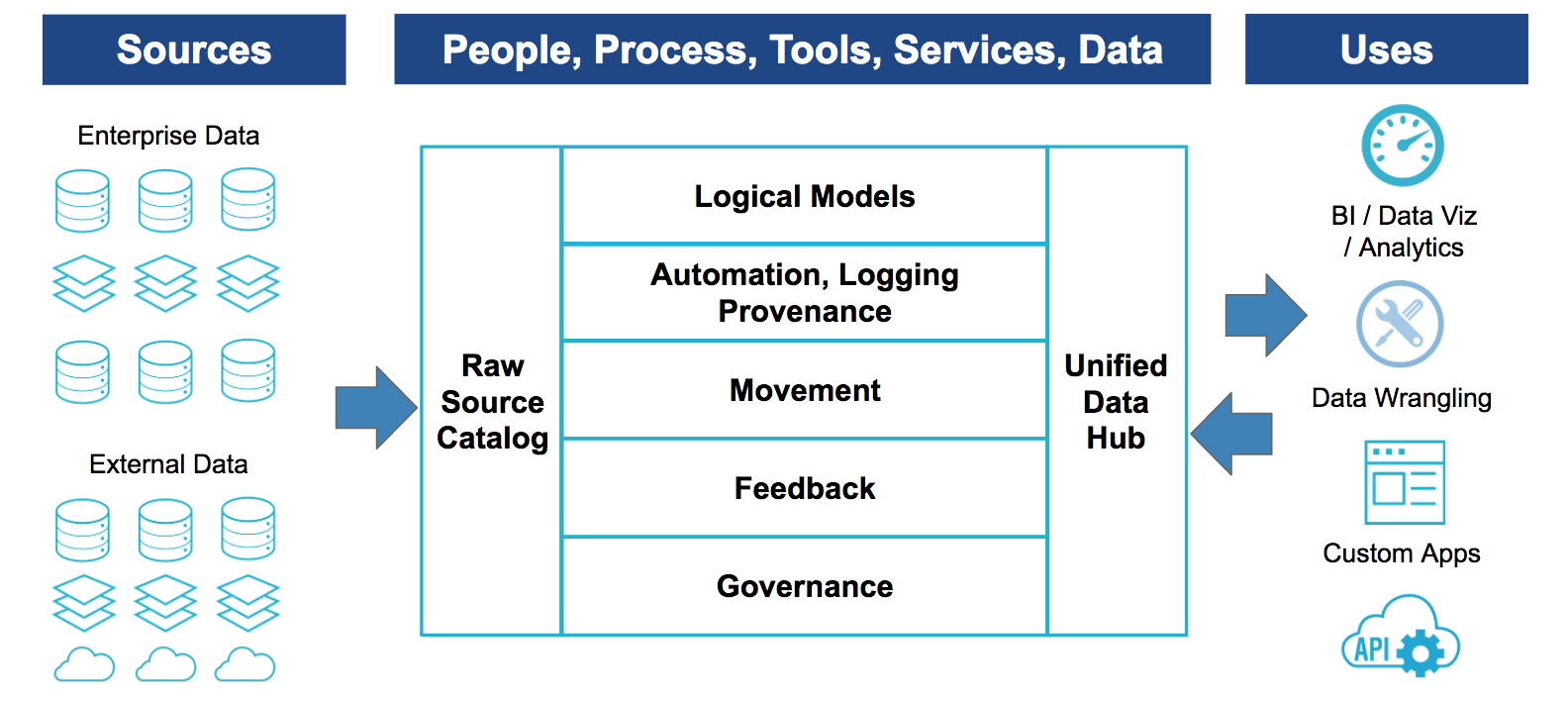

If we see AI as just technology, it makes sense to adopt it according to standard systems engineering practices: Build an enterprise data infrastructure; ingest, clean, and integrate all available data; implement basic analytics; build advanced analytics and AI solutions. This approach takes a while to get to ROI.

But AI can mean competitive advantage. When AI is seen as a differentiator, the attitude toward AI changes: Run if you can, walk if you must, crawl if you have to. Find an area of the business that you can make as smart as possible as quickly as possible. Identify the data stories (like predictive maintenance or real-time routing) that you think might make a real difference. Test your ideas using utilities and small experiments. Learn and adjust as you go.

It helps immensely to have a strong Analytics IQ: a sense for how to put smart machine technology to good use. We’ve built a short assessment designed to show where you stand and to suggest practical steps for improving. If you’re interested in applying AI in travel and transportation and are looking for a place to start, take the Analytics IQ assessment.