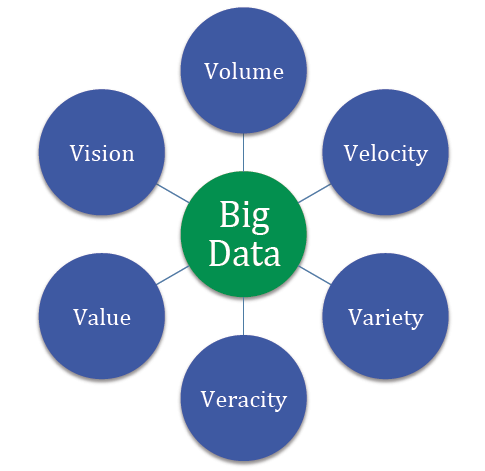

Our world is driven by big data, and dashboards matter because they give users the information they need at a single glance.

Like the dashboard in a car, a business dashboard summarizes large amounts of real-time data in a limited space. The information is broken down so that it doesn’t become visually overwhelming. This representation lets you measure your data and pick out the areas with scope for improvement. Done right, dashboard design can make a visible difference to a business’s performance.

Designing is fun, but designing a dashboard is not a piece of cake; it is quite a challenging task. However strong your creative urges, it is best not to unleash them all while designing a dashboard. Keep them in check before things get out of hand.

KNOW YOUR AUDIENCE

A dashboard is often assumed to be all about data, but that’s not true. It is about information, and about enabling users to make smart business decisions. The designer’s aim is to create a dashboard that makes users feel powerful. That is how hearts and minds are won.

To do so, you have to know your users. Sit down with them and find out what information they need, what their field of interest is and what their objective is. Once you have researched and gathered the required information, you can begin work on the dashboard.

Furthermore, you should design each dashboard with only ONE user in mind. The dashboard should provide the information that single user needs. If there are multiple users, design different dashboards for them.

HIERARCHY

While designing a dashboard, it is best to establish an information hierarchy, i.e., to organise information in a way that makes sense to the users. Remember, information placement is not about filling up empty space or about aesthetics. Key information should be placed so that it stands out and users catch it easily.

The most significant piece of information related to the user’s primary goal should always appear first. It should be followed by supporting material that gives the main content its context. For users to make the right decisions, it is important to show the relationships between data. Related data placed together makes far more sense than data placed randomly. For example, on a dashboard for fashion, the graphs for the hot trends should appear alongside the outgoing fashion sales.

LOGICAL DATA GROUPING

This is one obvious point. Group related pieces of data so that the grouping is clear and makes sense to the users. For instance, if you’re designing a dashboard for a cosmetic brand, don’t place the allergy complaints next to the sales data. It only muddles things.

The usual website layout conventions do not carry over to dashboard design. On a website, we generally place the brand logo at the top-left and the navigation options right below it or at the top-right. But readers of left-to-right languages such as English scan a page from left to right, top to bottom. So it is best to place the most crucial piece of information in the top-left corner. Decide wisely.
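The placement rule above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Widget` class, its `priority` field (lower means more important) and the widget names are hypothetical, and a real layout would also account for widget sizes.

```python
from dataclasses import dataclass


@dataclass
class Widget:
    name: str
    priority: int  # hypothetical field: lower number = more important


def place_in_reading_order(widgets, columns):
    """Assign (row, column) cells so the most important widget lands top-left."""
    ordered = sorted(widgets, key=lambda w: w.priority)
    # Fill the grid left to right, then top to bottom.
    return {w.name: divmod(i, columns) for i, w in enumerate(ordered)}


widgets = [
    Widget("Returns", 3),
    Widget("Revenue today", 1),
    Widget("Hot trends", 2),
    Widget("Outgoing sales", 4),
]
layout = place_in_reading_order(widgets, columns=2)
print(layout["Revenue today"])  # (0, 0), i.e. the top-left cell
```

The point of the sketch is that position follows priority, not whatever space happens to be free.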

BE MINIMALIST

Adding extra information to please your user will only become a headache for the user, followed by a headache for you. It is always better to keep the dashboard minimal, crisp and to the point.

While you’re planning how to present information to your user, ask yourself this: what will the user get out of this piece of information? It really works.

Now that you know where things go, try not to cram too much content onto the dashboard. It will only make a mess, which will be yours to clean up. Use minimal text. Avoid unnecessary images and graphics so that you don’t create visual noise. It may surprise you, but a simpler, user-friendly design can still carry high functionality, provided the right approach is followed.

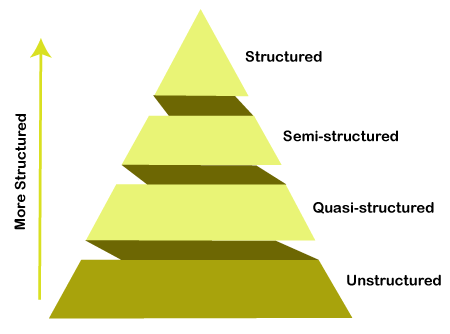

DATA VISUALIZATION, THE RIGHT WAY

Data visualization is not merely eye candy; it is a significant part of dashboard design. Raw data can be hard to process and monotonous to read. The purpose of visualization is to refine the raw data and present the relevant information in a short span of time while still allowing users to dig deeper if they wish. Pie charts, graphs and plots help users interpret and better understand the data. However, don’t go overboard, or you might end up overwhelming your users.

BE WISE, COLOUR-WISE

Colours are great; they make everything better. They also make data interpretation and analysis much easier for users. Choose your alert and button colours wisely, so that users notice the tasks, activities, events and features in your interface. The best way is to create a style guide that fixes the colour for each data state.

Dashboards are there to inform accurately, not to experiment with a colour palette.
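As a rough sketch of what such a style guide might look like in code, the Python below keeps one colour per data state in a single place. The state names, hex values and thresholds are all assumptions made for illustration, not a recommended palette.

```python
from enum import Enum


class DataState(Enum):
    OK = "ok"
    WARNING = "warning"
    CRITICAL = "critical"
    NO_DATA = "no_data"


# Hypothetical style guide: every widget looks colours up here,
# so the same state always gets the same colour.
STYLE_GUIDE = {
    DataState.OK: "#2E7D32",
    DataState.WARNING: "#F9A825",
    DataState.CRITICAL: "#C62828",
    DataState.NO_DATA: "#9E9E9E",
}


def state_for(value, warn_at, critical_at):
    """Classify a metric where higher values are worse (e.g. open complaints)."""
    if value is None:
        return DataState.NO_DATA
    if value >= critical_at:
        return DataState.CRITICAL
    if value >= warn_at:
        return DataState.WARNING
    return DataState.OK


def colour_for(value, warn_at, critical_at):
    return STYLE_GUIDE[state_for(value, warn_at, critical_at)]


print(colour_for(12, warn_at=10, critical_at=20))  # the WARNING colour
```

Because every colour comes from one table, changing the palette later means editing one dictionary, not every chart.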

WHEN TO REFRESH YOUR DATA

Data refreshing is important, as it helps in prioritising and placing the elements. For instance, if a piece of data is refreshed often, that suggests it plays a crucial role. Hence, it is best to place that information first, where the user can catch it as early as possible.

At the same time, it is important to tell your users when the data was last refreshed, and whether they need to refresh it manually.
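One way to surface this is a short notice next to the data. The Python sketch below builds such a notice; the function name `refresh_notice`, its parameters and the wording of the messages are assumptions for illustration only.

```python
from datetime import datetime, timedelta, timezone


def refresh_notice(last_refreshed, now, auto_refresh_every=None):
    """Say how old the data is and whether the user must refresh it manually."""
    minutes = int((now - last_refreshed).total_seconds() // 60)
    if minutes < 1:
        when = "Updated just now"
    elif minutes < 60:
        when = f"Updated {minutes} min ago"
    else:
        when = f"Updated {minutes // 60} h ago"

    # No auto-refresh interval means the user has to refresh by hand.
    if auto_refresh_every is None:
        return f"{when}. Refresh manually for the latest data."
    every_min = int(auto_refresh_every.total_seconds() // 60)
    return f"{when}. Refreshes automatically every {every_min} min."


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
last = now - timedelta(minutes=7)
print(refresh_notice(last, now))
print(refresh_notice(last, now, auto_refresh_every=timedelta(minutes=5)))
```

Passing `now` in explicitly, instead of reading the clock inside the function, keeps the notice easy to test.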

Planning data presentation, especially deciding what to exclude, is truly a SCIENCE. Data alone lacks context and meaning; that is where designers come in. They take responsibility for making the data understandable and presentable. Dashboards are so crucial for a business that they can make or break your product or application.

So, along with being creative in the work you love, make the right choices as well.