Airline workers have it tough.

A new generation of voice-driven software bots promises to make their work easier.

Airline employees, whether pilots, flight attendants or maintenance, repair and overhaul (MRO) technicians, are often called on to perform challenging tasks in a hurry. Think of a pilot dealing with a mechanical failure, a flight attendant who can’t make a connection because of bad weather, or a technician who urgently needs a crucial part that’s out of stock.

To help solve these tough challenges in real time, a new generation of “voicebots” leverages two advanced approaches:

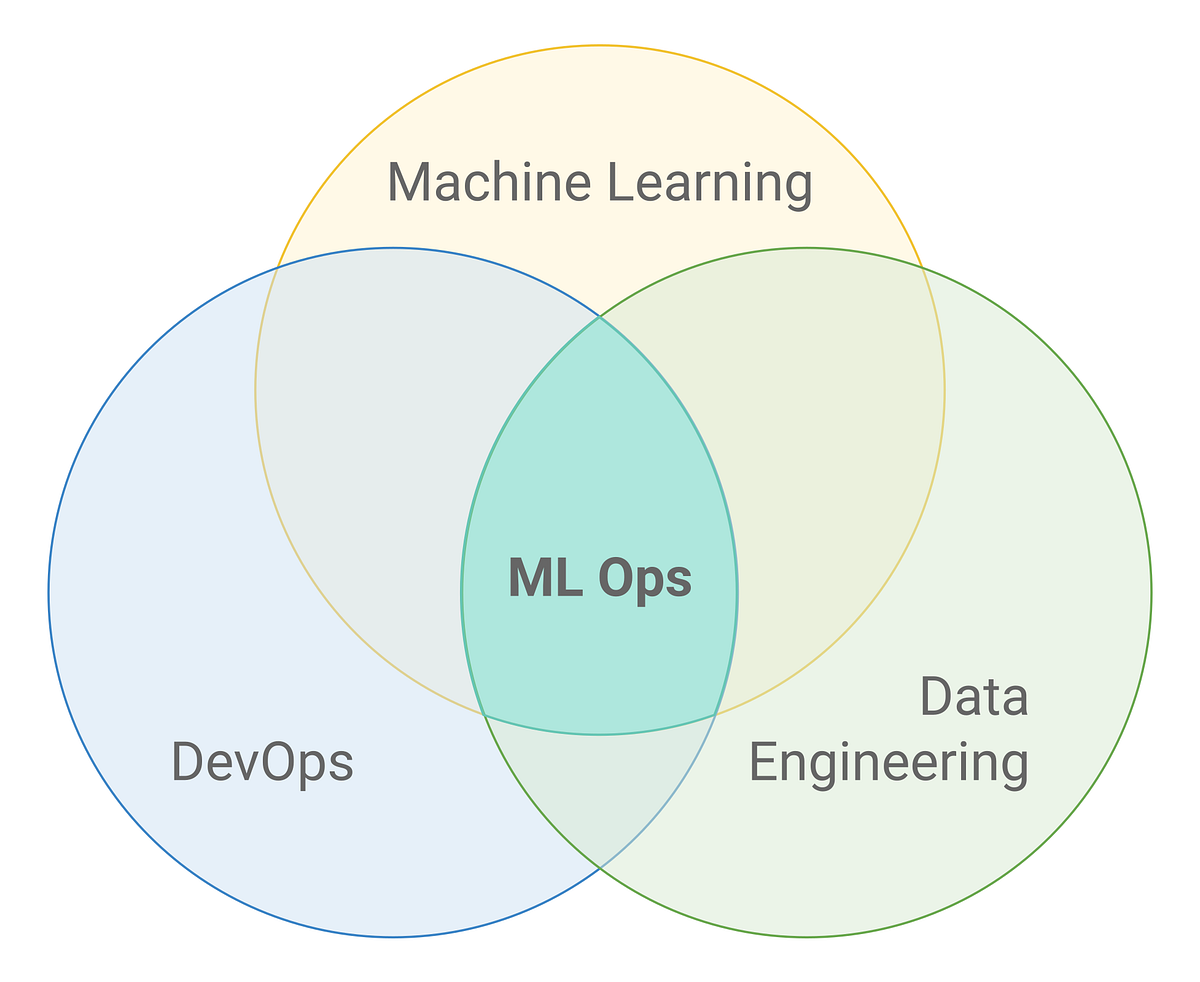

- The first, natural language processing (NLP), lets machines and humans interact using “natural” (that is, human) languages.

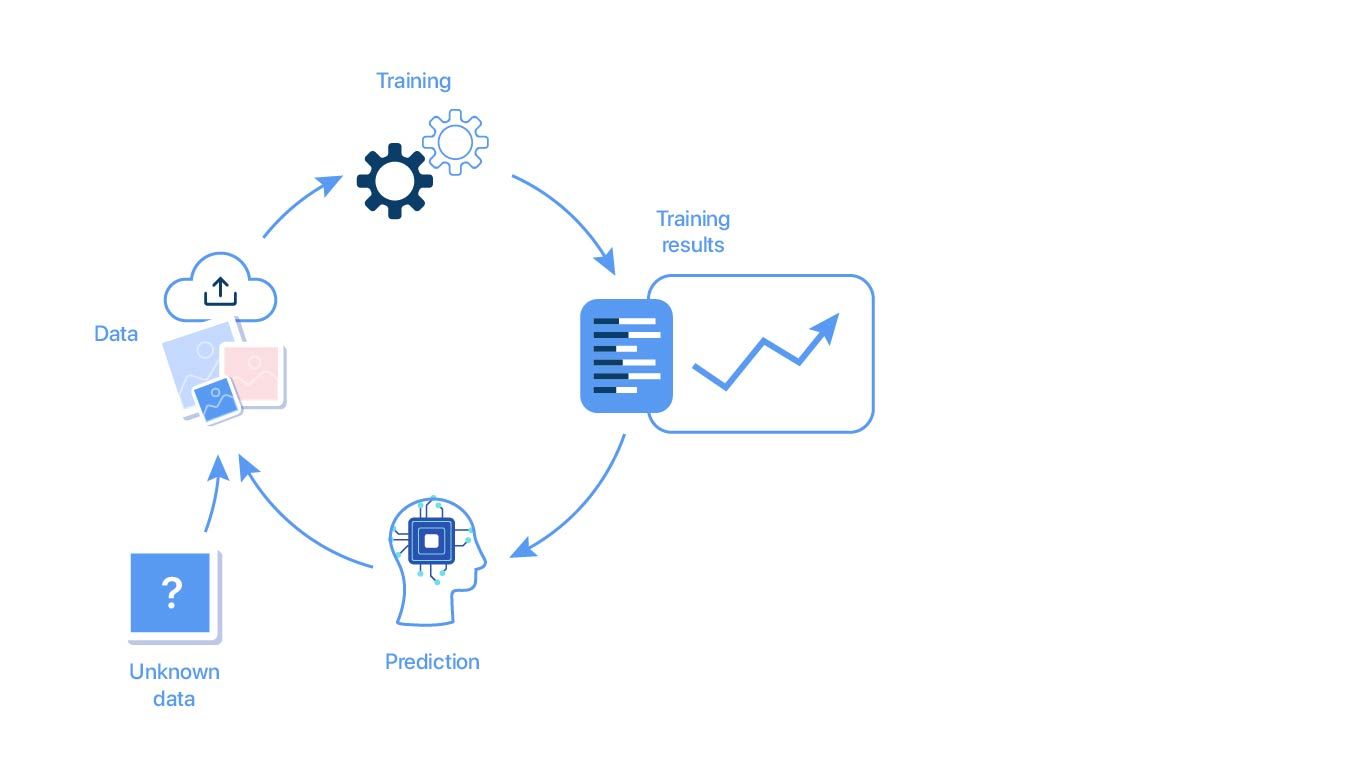

- The second, machine learning (ML), is a subset of AI that empowers computer systems to build mathematical models based on observed patterns.

Voicebots eliminate the need to type, click or point. Instead, a worker can simply speak normally, then listen as the voicebot speaks back in response. What’s more, the latest voicebots can actually detect a speaker’s mood – for example, a sense of urgency – and then use that information to prioritize requests, such as ordering a new part.

Voicebots can also deliver important business benefits to the enterprise. For one, they empower airlines to automate tasks formerly done by hand, then expedite them based on priorities detected in a speaker’s voice. This can help airlines ease disruptions and delays, as well as lower costs and reallocate those savings to new and innovative projects. Imagine, for example, an airline that uses voicebots to ensure more efficient maintenance. If it could lower the number of flight delays by just 0.5%, the airline would enjoy total annual cost savings of $4 million to $18 million, depending on the number of daily flights.

By implementing this cutting-edge technology, airlines should also have an easier time attracting and retaining tech-savvy workers, possibly helping to mitigate the labor shortages forecast for the industry.

Voice technology soars — in the air and on the ground

Voicebots for airline workers are part of the bigger trend of voice technology that consumers are already on board with. For example, market-watcher IDC predicts consumers worldwide will purchase more than 144 million voice-enabled smart speakers this year. The business market is ripening, too. Amazon, Google and Microsoft are all dedicating serious resources to expanding their voice technologies for B2B use.

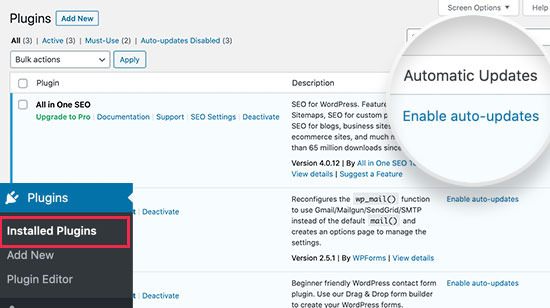

Some airlines already use AI-powered chatbots to serve their customers. These chatbots can be programmed to understand the intent behind a customer’s request, recall an entire conversation history and respond to requests in a human-like way.

On the enterprise side, aircraft-maker Boeing is among the manufacturers investing in AI and related voice technologies. The company is conducting research on NLP, speech processing, acoustic modeling, language modeling and speech recognition.

Real-life scenarios

How will airline employees benefit from using voicebots? Here are a few possible applications:

- Pilots can use voicebots both during preflight preparations and while actually flying. Carrying out a complicated command from air-traffic control, which means turning all the knobs and pressing all the necessary buttons, can take pilots up to 30 seconds. A speech-recognition system can cut that time dramatically, allowing pilots to keep their eyes on the traffic and weather, and to keep the airplane safe.

- MRO technicians can use voicebots to assist with maintenance and repairs. A technician needing to replace a specific component could ask a voicebot, “Do we have this part in stock?” If the answer is no, the bot could then find the nearest location where the part is available and arrange for it to be shipped. The voicebot could even select Express or Standard delivery based on the urgency detected in the technician’s voice.

- Flight attendants can use voicebots when encountering flight delays, cancellations and other common scheduling changes. For example, a flight attendant who is snowbound in Denver could tell a voicebot, “Notify Dallas that I’m going to miss my connecting flight today. Then find someone who can fill in for me on the next flight.” The airline’s crew-scheduling system could then make the necessary changes in real time.
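To make the MRO scenario above more concrete, here is a minimal Python sketch of the decision logic a voicebot might run once speech has already been turned into a part number and an urgency score. Everything in it is an assumption made for illustration: the `Depot` record, the `handle_part_request` and `choose_delivery` helpers, the 0-to-1 urgency score and the 0.7 threshold are hypothetical and do not describe any particular airline's or vendor's system.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Depot:
    """Hypothetical parts location: a name, a distance and stock on hand."""
    name: str
    distance_km: float
    stock: Dict[str, int] = field(default_factory=dict)


def choose_delivery(urgency: float, threshold: float = 0.7) -> str:
    """Map an assumed urgency score in [0, 1] to a delivery tier."""
    return "Express" if urgency >= threshold else "Standard"


def handle_part_request(
    part: str,
    local_stock: Dict[str, int],
    depots: List[Depot],
    urgency: float,
) -> Dict[str, str]:
    """Answer "Do we have this part in stock?" and act on the answer."""
    # Case 1: the part is available where the technician is working.
    if local_stock.get(part, 0) > 0:
        return {"part": part, "action": "use_local_stock"}

    # Case 2: find the nearest depot that actually has the part.
    candidates = [d for d in depots if d.stock.get(part, 0) > 0]
    if not candidates:
        # Nothing in the network: hand the request to a human.
        return {"part": part, "action": "escalate"}

    nearest = min(candidates, key=lambda d: d.distance_km)
    return {
        "part": part,
        "action": "ship",
        "from": nearest.name,
        "delivery": choose_delivery(urgency),
    }
```

In a real deployment the urgency score would come from the speech-analysis layer and the stock data from the airline's inventory systems. The sketch only shows how the two could combine into one shipping decision.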

Getting started

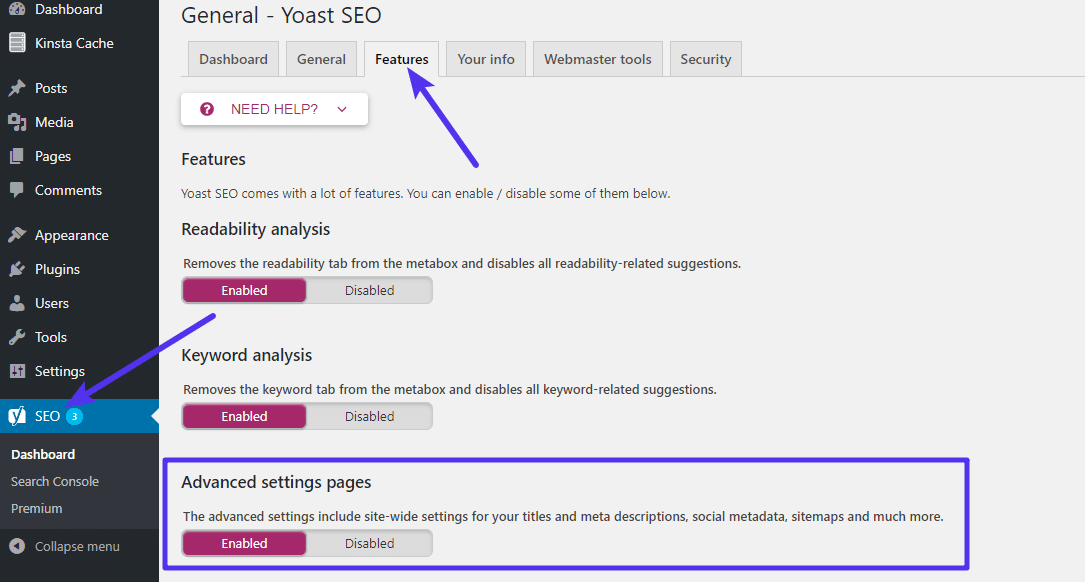

Airlines looking to equip their employees with voicebots may wonder how to begin. We suggest a three-step process:

Step 1: Ideation. Begin by brainstorming. Assemble your team and ask them: What are our biggest disruptions? How could voice technology help?

Step 2: Proof of concept. With your biggest disruptions in mind, develop a potential solution using voicebots.

Step 3: MVP. Borrow a tactic from the Agile approach and create a minimum viable product (MVP). This does not need to be a perfect, complete piece of software. Instead, create just enough for early tests and feedback. Then iterate as needed.

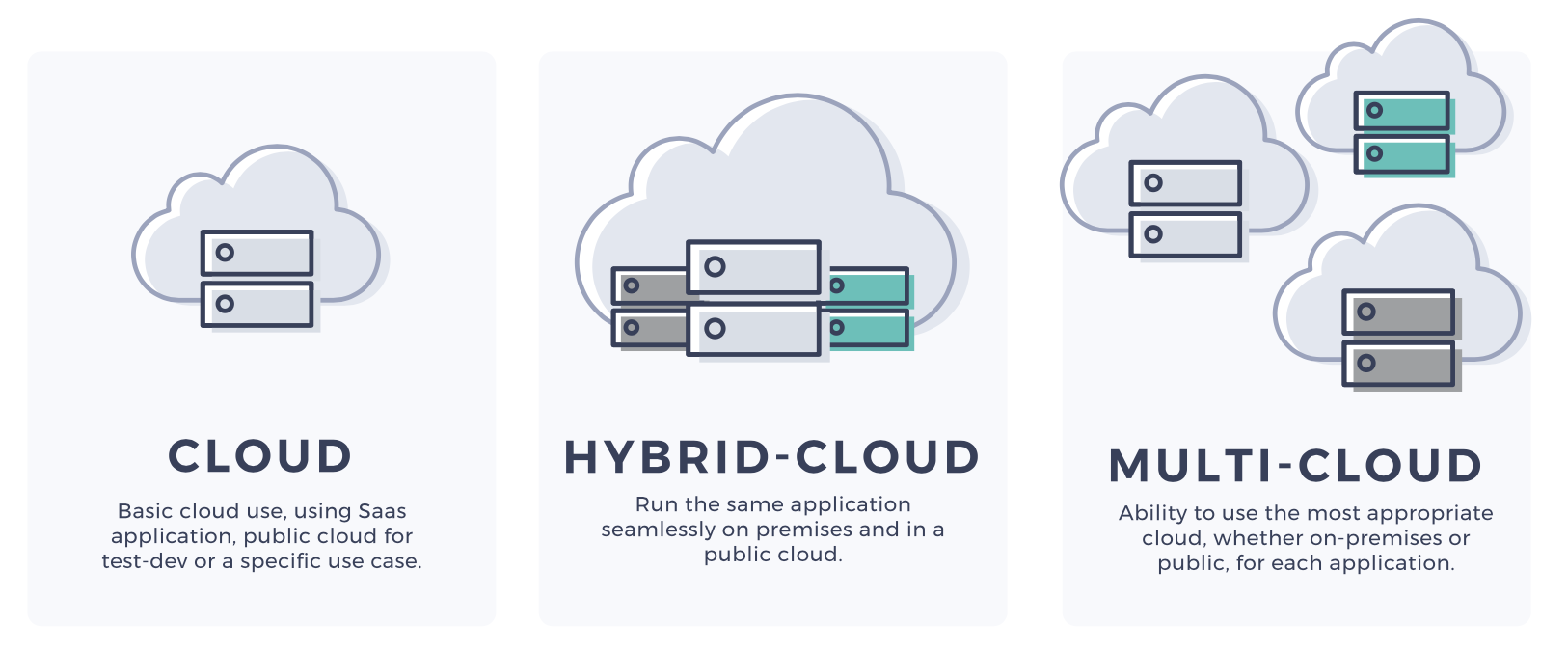

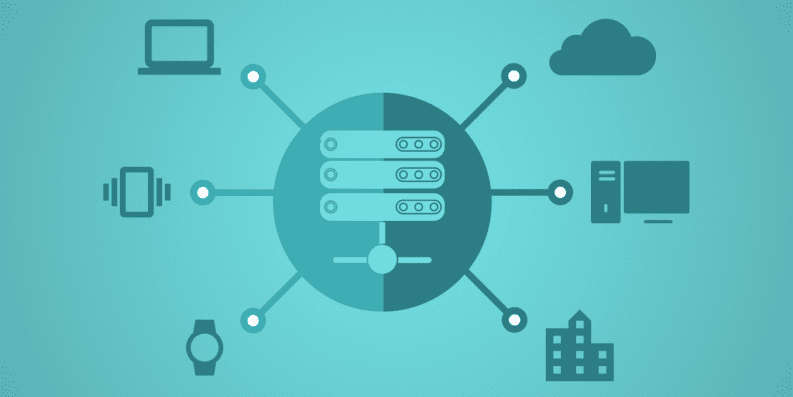

Airlines looking to employ voicebots will also need to take on one more challenge: data access. Voicebots need quick access to all enterprise data. Yet many airlines keep their data protected in silos, mainly for security reasons. For voicebots, that makes gaining access to this data difficult and slow.

To resolve this issue, airlines need to find an acceptable balance between data security on the one hand and speedy voicebot data access on the other. This could be hard. But the alternative, doing nothing, could be even worse. Any airline that doesn’t adopt voicebots can be sure the competition will.